For convenience, copy the tarball archive to your Desktop.

Next, download your custom kernel (either the tar.gz or tar.xz file) from.

The latest stable kernel currently is 4.15.4, which is available at. However, for this post I will be detailing the steps required to compile version 4.7.1 of the Linux kernel on a virtual machine running Ubuntu 16.04.3.

First of all, boot Ubuntu on your VM, log in, open Terminal and run the following command:

`uname -r`

This will display the current kernel version of your Linux distro.

Secondly, you will need to install a few packages before you can proceed:

`sudo apt-get update`

`sudo apt-get install git fakeroot build-essential ncurses-dev xz-utils libssl-dev bc`

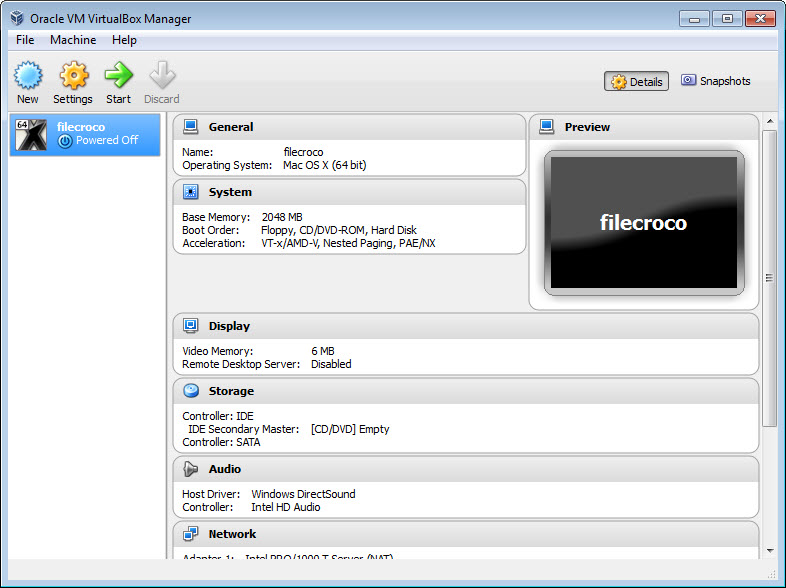

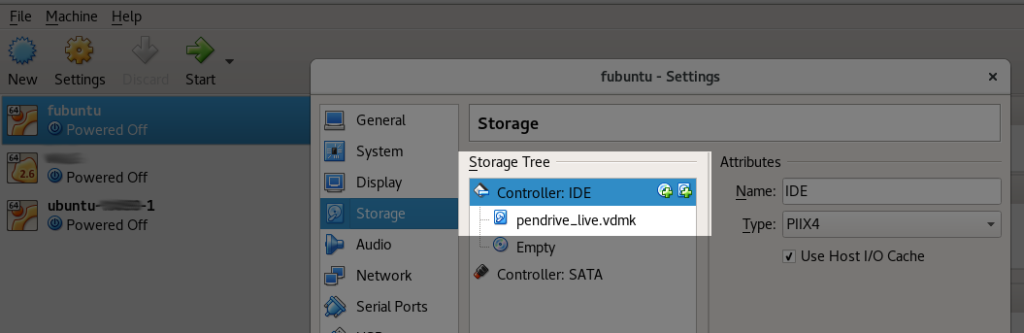

Compiling a Custom Linux Kernel on a Virtual Machine

Note: This post is not exhaustive by nature; consider it a minimalistic approach. Knowing that, you are welcome to scroll right to the end of this post for a few references that may satisfy your expectation of rigour or technicality. So deal with it.

Before we begin, I’m assuming that you already have a laptop/PC with a virtual machine application (such as VMware Fusion or Workstation, VirtualBox, Parallels Desktop, etc.) installed, running a Linux distribution (Ubuntu, Linux Mint, etc.).

N, the problem size in HPL.dat, should typically be around 80-90% of the size of total memory:

N = sqrt((Memory Size in GBytes * 1024^3 * Number of Nodes) / Double Precision (8 bytes)) * percentage

NB values in the low 100s or 200s are good for multiple nodes. Larger NB values, close to or above 1000, are good for GPUs and accelerators. I normally select N to be a multiple of NB, so there is no performance drop-off towards the end of the calculation.

For the rest of the parameters, you can read about them here. You can find the CUDA tuning information in the CUDA HPL version. For Intel, you can find it here.
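The problem-size formula above can be sketched as a small shell helper. This is only an illustration: `hpl_problem_size` is a hypothetical name and not part of HPL, the memory figure is assumed to be per node (since the formula multiplies it by the number of nodes), and rounding down to a multiple of NB is added to match the advice about the end of the calculation.

```shell
#!/bin/sh
# Hypothetical helper, not part of HPL.
# Usage: hpl_problem_size MEM_GB_PER_NODE NODES FRACTION NB
hpl_problem_size() {
    awk -v mem="$1" -v nodes="$2" -v frac="$3" -v nb="$4" 'BEGIN {
        # Number of 8-byte doubles that fit in the total memory
        doubles = mem * 1024 * 1024 * 1024 * nodes / 8
        # Formula from the text: sqrt(...) * percentage
        n = sqrt(doubles) * frac
        # Round down to a multiple of the block size NB
        n = int(n / nb) * nb
        printf "%d\n", n
    }'
}

# Example: 4 nodes with 16 GB each, 85%, NB = 192
hpl_problem_size 16 4 0.85 192
```

With these example inputs the helper prints 78720, which is 410 blocks of 192. Treat the result as a starting point for tuning rather than a final value.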

Next is specifying the location of your MPI files and binaries. MPdir should specify the exact file path to the version of MPI you want to use, up to the root where include, lib, and bin are located. MPlib is similar to the image above, except that libmpich.a depends on the MPI version you installed.

** Note: if you are using a *.so instead of a *.a, like the one in the image, then you need to add the library path to your environment. Open your shell startup file:

`vim ~/.bashrc`

Add the following to the end of the file (note that there is no `$` in front of the variable name being assigned):

`export LD_LIBRARY_PATH=/path/to/mpi/lib:$LD_LIBRARY_PATH`

Then reload the file, and the library will be found every time you log on to your system.

LAdir should specify the exact location of the BLAS binary. LAinc should specify the include directory, if you need to include it. If the BLAS file is a *.so file, you can follow the steps above to add it to your environment.

For HPL_OPTS, add -DHPL_DETAILED_TIMING for better analysis when tuning the HPL.dat file. Lastly, you can specify your compiler (CC) and compiler flags (CCFLAGS). If you linked everything correctly, then in the bin// directory, there should be a.
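As a rough sketch of the library-path step, the lines below could go at the end of `~/.bashrc`. The directory `/opt/mpich/lib` is only a placeholder assumption; substitute the `lib` directory of your own MPI (or BLAS) installation. The `case` guard is an optional addition so that re-sourcing the file does not add the same directory twice.

```shell
# Placeholder path (assumption): point this at your own MPI lib directory.
MPI_LIB_DIR="${MPI_LIB_DIR:-/opt/mpich/lib}"

# Prepend the directory only if it is not already on LD_LIBRARY_PATH.
case ":${LD_LIBRARY_PATH:-}:" in
    *":$MPI_LIB_DIR:"*)
        ;;  # already present, nothing to do
    *)
        # ${VAR:+...} avoids a trailing ':' when the variable was empty
        export LD_LIBRARY_PATH="$MPI_LIB_DIR${LD_LIBRARY_PATH:+:$LD_LIBRARY_PATH}"
        ;;
esac
```

Run `source ~/.bashrc` (or open a new terminal) to reload the file after editing it.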

The first step is to make a copy of an existing makefile from the setup/ folder and place it in the root directory of HPL. I suggest Make.Linux_ATHLON_CBLAS, since that is the closest to generic systems. For CUDA and Intel, Make.CUDA and Make.intel64 are already created for you. Here, you may or may not need to modify TOPdir. Typically you should set it to the full path of your HPL directory.
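The copy-and-edit step can be sketched as a shell function. `prepare_hpl_makefile` is a hypothetical helper name, and the sketch assumes the makefile contains a line starting with `TOPdir` followed by `=`, and that the HPL path contains no `|` or `&` characters (they would confuse the `sed` expression).

```shell
#!/bin/sh
# Hypothetical helper, not part of HPL.
# Usage: prepare_hpl_makefile /full/path/to/hpl Linux_ATHLON_CBLAS
prepare_hpl_makefile() {
    hpl_dir=$1
    arch=$2

    # Copy the template from setup/ into the HPL root directory
    cp "$hpl_dir/setup/Make.$arch" "$hpl_dir/Make.$arch" || return 1

    # Point TOPdir at the full HPL path; write via a temp file
    # instead of relying on the non-portable 'sed -i'
    sed "s|^TOPdir[[:space:]]*=.*|TOPdir       = $hpl_dir|" \
        "$hpl_dir/Make.$arch" > "$hpl_dir/Make.$arch.tmp" &&
        mv "$hpl_dir/Make.$arch.tmp" "$hpl_dir/Make.$arch"
}
```

The template in setup/ is left untouched, so you can always start over from a clean copy.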